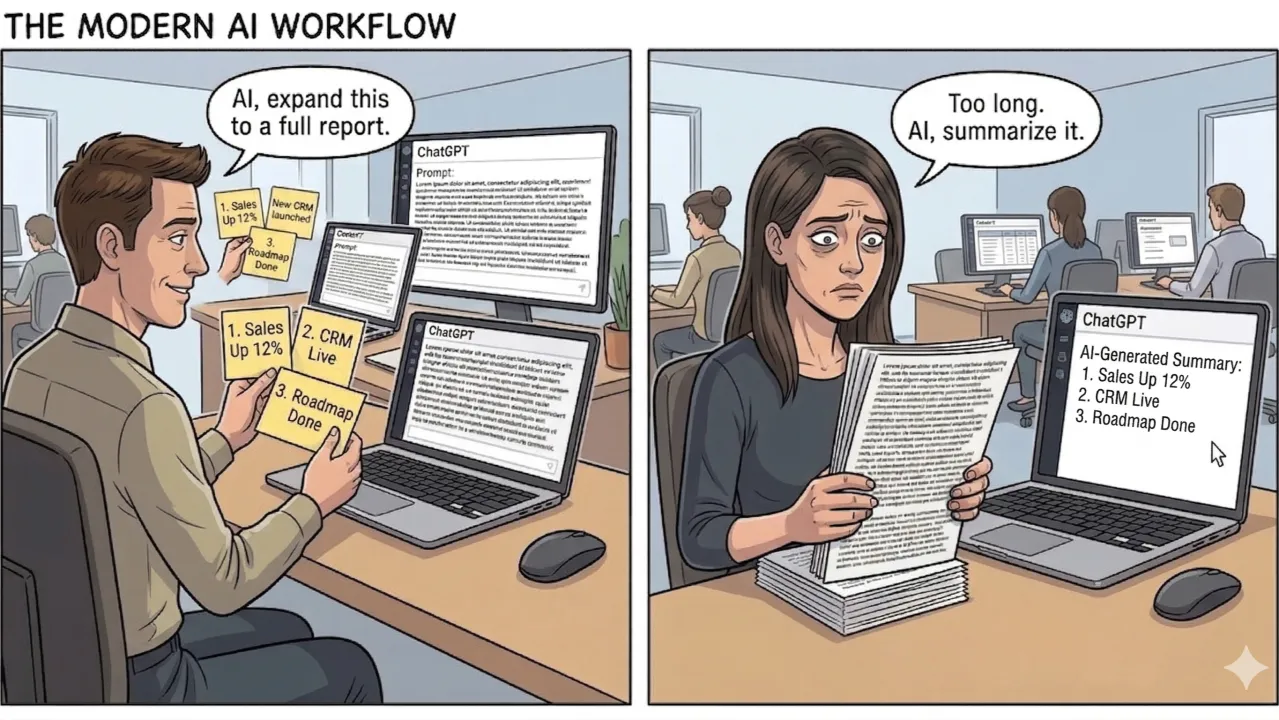

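Here's a scene playing out on thousands of teams right now: someone asks an AI to inflate three bullet points into a polished document, and the recipient asks an AI to boil that document back down to three bullet points.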

Two AI calls. Zero thinking transferred. A perfectly efficient system for communicating almost nothing.

We laugh, but the joke lands because we've all been on one side of it. And the real problem isn't the wasted tokens; it's what's missing from the middle.

The author had reasons for those three bullets and depth behind each. They knew which one mattered most. They knew the second one was controversial. They had context from a customer call that shaped the third. None of that made it into the polished doc, even though the doc looked done.

The recipient, reading something that looked done, treated it as if it were authored with human intent. They didn't push back, didn't ask questions, didn't engage with the thinking because the artifact presented no thinking to engage with. Just conclusions, wrapped in confident prose that a machine wrote and a human sort of endorsed.

This is the new communication gap. It's not a lack of clarity; it's a lack of provenance. The person reading your work doesn't know what's yours and what's the machine's. They don't know what you wrestled with, what you rejected, or where your judgment actually shaped the output. And without that, they can't do their job, which is to bring their own context and judgment to the thing you made.

The ironic inversion

Here's what's strange about this moment: AI reasoning is becoming more visible while human reasoning is becoming less visible.

Models now ship with chain-of-thought, reasoning traces, "thinking" blocks. You can literally watch the machine show its work.

But the human? The person who chose this framing over that one, who rejected the first approach because they knew something about the system the AI didn't, who re-prompted a dozen times before the output matched what they actually meant? Their thinking has no artifact. No format. No convention. No place to live.

The AI's reasoning is now more recoverable than the human's. If someone ships code and the model used chain-of-thought, you can at least reconstruct some of the AI's path. The human's twelve prompt iterations and the approach they rejected? Gone.

We've arrived at a world where the machine shows its work and the person doesn't.

Why this breaks teams

For solo work, invisible thinking isn't much of a problem. You remember your own reasoning. You were there.

For teams, it's corrosive. And it compounds.

Working solo with AI works because context is unified in one head. The person is the memory layer. Teams break because the faster individuals move with AI, the more invisible their reasoning becomes to everyone else. AI-generated output accelerates this because it looks finished. Polish forecloses the questions that rougher work would naturally invite.

When you get a half-formed sketch from a teammate, you naturally ask: "What are you thinking here?" When you get a polished document, you assume the thinking is done. You might disagree with the conclusion, but you engage with it as a conclusion and not as a draft of someone's reasoning that needs your input.

The fastest approach (for an author) is to copy the AI output and throw it over the wall. It's tempting because the output looks finished. The code compiles. The doc is well-structured. But nothing signals whether the person who sent it spent three minutes with it or three hours.

When you send too quickly, you're not just skipping your own quality check; you're transferring the cognitive burden to everyone downstream. Someone else now has to figure out whether this thing is good, whether it fits, whether the assumptions behind it hold. You had that context. You just didn't share it.

And here's the trust spiral: once people get burned by polished-but-hollow work a few times, they stop engaging carefully with any AI-assisted output. The team's collective quality bar drops, not because the AI got worse, but because nobody trusts the human layer anymore.

What to do about it

This isn't a problem you solve with a policy memo. It's a set of small habits that change how your team communicates. Here are four that work:

1. Label the provenance.

Start marking your internal docs and artifacts as AI-generated, human-generated, or human-edited. It sounds almost too simple, but try it for a week. What changes is how your team reads. When people know a doc was AI-assisted, they bring more skepticism to the structure and more attention to whether the reasoning holds. When they know it was human-written, they trust that there's intention behind it and can engage more deeply. The label doesn't slow anything down. It just restores a signal that AI quietly removed.

A lightweight version: just add a line at the top. "Drafted with Claude, edited and restructured by me. The recommendations in section 3 are mine; the market analysis is mostly AI-generated."

2. Annotate your reasoning, not just your output.

The opposite of slinging slop isn't perfecting; it's annotating. When you share AI-assisted work, include a few lines about your decisions:

- "I asked for three approaches and picked this one because X"

- "The AI suggested including Y but I cut it because we tried that last quarter and it didn't move the needle"

- "I'm least confident in the pricing section; that needs someone with more context on enterprise deals"

This takes sixty seconds and transforms how the next person engages. They're no longer evaluating a finished artifact; they're joining a conversation about decisions, with enough context to add their own judgment.

3. Share the intent, not just the conclusion.

Write your plans before your implementations. Not a polished spec, but a working doc: the problem you're solving, the approaches you considered, and the one you picked and why. Put it next to the code or presentation. Share it before you start building.

If you already do this, try giving each other feedback on these docs before jumping to implementation. You'll be surprised how much misalignment surfaces in five minutes of reading someone else's reasoning.

4. Make "show your work" a team norm, not a personal virtue.

"Show your work" used to mean math class. Now it means: show the decisions you made on top of what the AI gave you. This only works if it's a team norm, not something one conscientious person does.

In practice: PRs include a sentence about which parts were AI-generated and what the human shaped. Design docs have a "decisions made" section. Slack threads about AI-generated analysis include what the person checked and what they didn't.

The format doesn't matter. What matters is the habit of making human judgment visible alongside the AI output, because your teammates need your thinking to do their jobs.

The team that shares thinking compounds

Here's the payoff for getting this right: the team that shares thinking compounds its intelligence. Every artifact carries context. Every handoff includes reasoning. The next person doesn't start from zero; they start from where you left off, with enough understanding to build on your judgment instead of just reacting to your output.

The team that shares only output stays flat. Each person re-derives the context. Each handoff loses information. The AI gets better every month, but the team's collective understanding doesn't improve because nobody's thinking is making it into the shared record.

Now replay the opening scene. You have three bullet points. Instead of inflating them into a polished doc, you share the bullets, with a line about why each one matters, what you're not sure about, and what you need from your team.

Your team reads it in two minutes instead of twenty. They respond with their own context. A real conversation happens.

No AI required.

(This article was generated using Claude against a corpus of draft ideas and transcripts of conversations and monologues I've had over the past year. I did 4 passes of prompting on the overall doc, and 4 passes of prompting on the comic image. I then read and hand-edited the entire document.)
