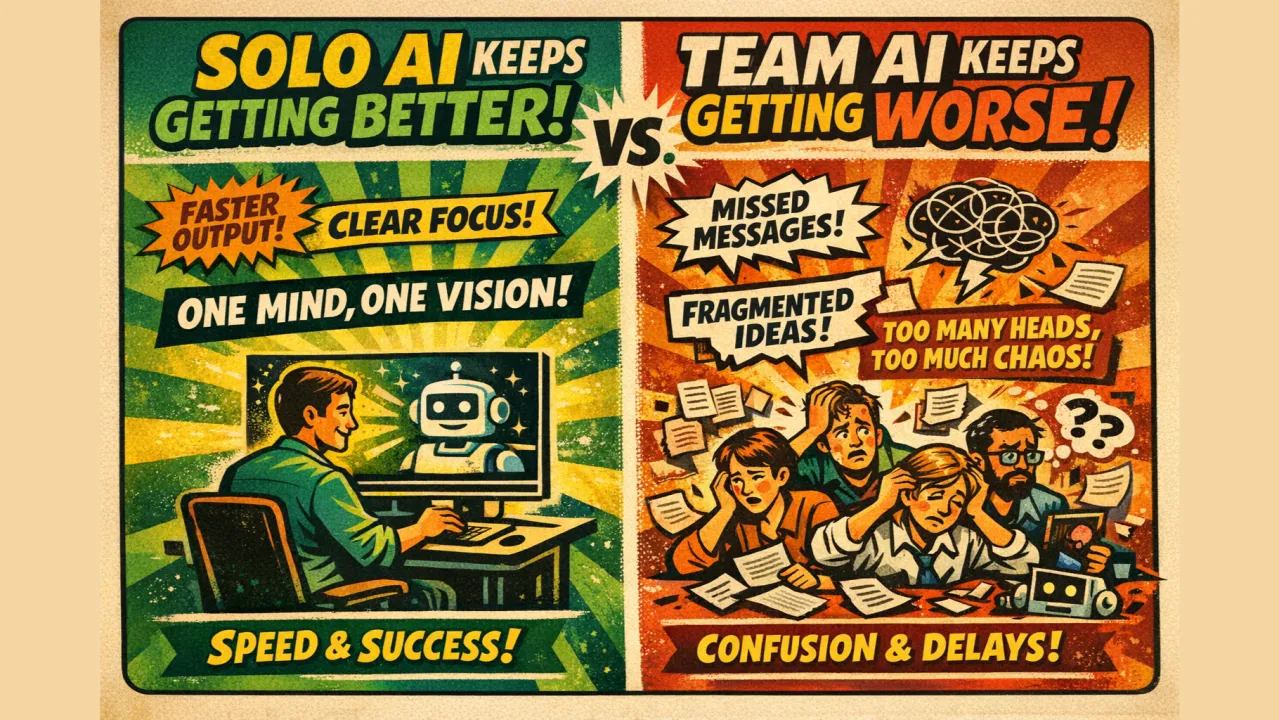

Solo AI keeps getting better. Every month the tools improve: faster models, larger context windows, smarter agents. A single person with a clear idea can turn it into working software in an afternoon. That loop keeps getting tighter, and there's no ceiling in sight.

Team AI keeps getting worse. Not because the tools are bad, but because the faster individuals move, the more visible the gaps between them become. Decisions get made in private sessions. Context lives in one person's head. The team's shared understanding fragments a little more every week. AI didn't create this problem, but it's accelerating it.

Here's what we've been sitting with at SpecStory.

A tight team should beat a solo operator. Not because of more hands, but because of more angles on the same problem. Greg talked to users the others didn't. I have context from sales calls that never made it into a doc. Sean knows which part of the system will buckle under a particular design choice. No one has the full picture. Everyone has a piece. And even though we're all coding, we need to align on something higher-level than the code.

That diversity of context is a genuine multiplier if it can actually be harnessed. Right now, it can't. It's trapped in people's heads, scattered across Slack threads, buried in meetings that ended without a record. The conversations where the real thinking happened are gone by the time anyone needs them.

So what happens instead? Each one of us tries to hold it all together. We become the memory for our team, trying to preserve every insight, tradeoff, and decision. When it was just three of us, that worked (barely). Now it's breaking as we approach ten. And while all we want to do is build and sell, we're now spending half our time revisiting or re-explaining context we've already built up but just can't find anymore.

A lot of people reach for "new workflows" as the answer. More check-ins. Better handoff docs. Daily standups with AI on the agenda. We resist these reflexively.

They help at the margins, but they treat the symptom rather than the cause: the intent that produced the work was never captured as a shared artifact in the first place.

The habit shift that actually matters is treating intent as something the whole team owns.

Not just prompts, but whole conversations. The sync where someone pushed back on the original approach. The design review where a tradeoff got made. The customer call that reframed the whole problem. That context exists. It just evaporates the moment a meeting ends, inaccessible to the next person who needs it and invisible to the agents that could use it.

One concrete thing we do to mitigate this is write our plans in markdown and share them. Just a working doc, not a polished spec: the problem we're solving, the approaches we've considered, the one we picked and why. Then we show it to each other. This is an easy habit to adopt. If you've already adopted it, go one step further and give each other feedback on these docs (not as an implementation gate, just as a way to build shared understanding and improve). You'd be surprised how much misalignment surfaces in five minutes of reading someone else's reasoning.

As we started doing this, we noticed a related trust problem that snuck up on us.

As more of our output, including these plans, was written with AI assistance, reading became not only a bottleneck but also something that required a new lens. Is this doc the product of an hour of thinking, or sixty seconds of prompting? Did someone shape this, or just ship it? The artifact often looks the same either way. And when we couldn't tell, we started discounting each other's work (or worse, we stopped reading with care).

A simple fix if you're hitting the same problem: label your internal docs as AI-generated, human-written, or both. Give some indication of how much time and effort you spent iterating on them. It sounds almost too simple, but try it for a week. What changes is how your team reads. When people know a doc was AI-generated, they engage with it differently, bringing more skepticism to the content and more attention to whether the reasoning holds. When they know it was human-written, they trust the judgment (or at least the intent) behind it more. The label doesn't slow anything down. It just restores a signal that AI quietly removed.

The team AI problem isn't a tools problem. It's a context problem. How do you make a team's distributed knowledge (its reasoning, tradeoffs, and context) available to the people and agents doing the work?

We don't have a complete answer yet. But we're increasingly convinced the path runs through intent. Not capturing what got shipped, but why. Conversations as a team resource. Context as something you manage deliberately, the way you manage code.

Solo AI is simple. One person, one mental model, clean execution.

Team AI is harder. Multiple people, distributed intent, conversations that need to compound instead of evaporate.

That's the frontier we're building toward. See more at https://withstoa.com
