What Cognitive Science Says About Managing AI Coding Agents

Executive Summary

Modern developers managing AI coding agents are engaged in a fundamentally new type of cognitive work—one that combines elements of people management with technical deep work in ways that have no historical precedent. This analysis synthesizes research from cognitive psychology (dual-task performance, bottleneck theory), management science (span of control, nature of managerial work), and emerging empirical studies on AI-assisted development to understand the implications for productivity, team structure, and tool design.

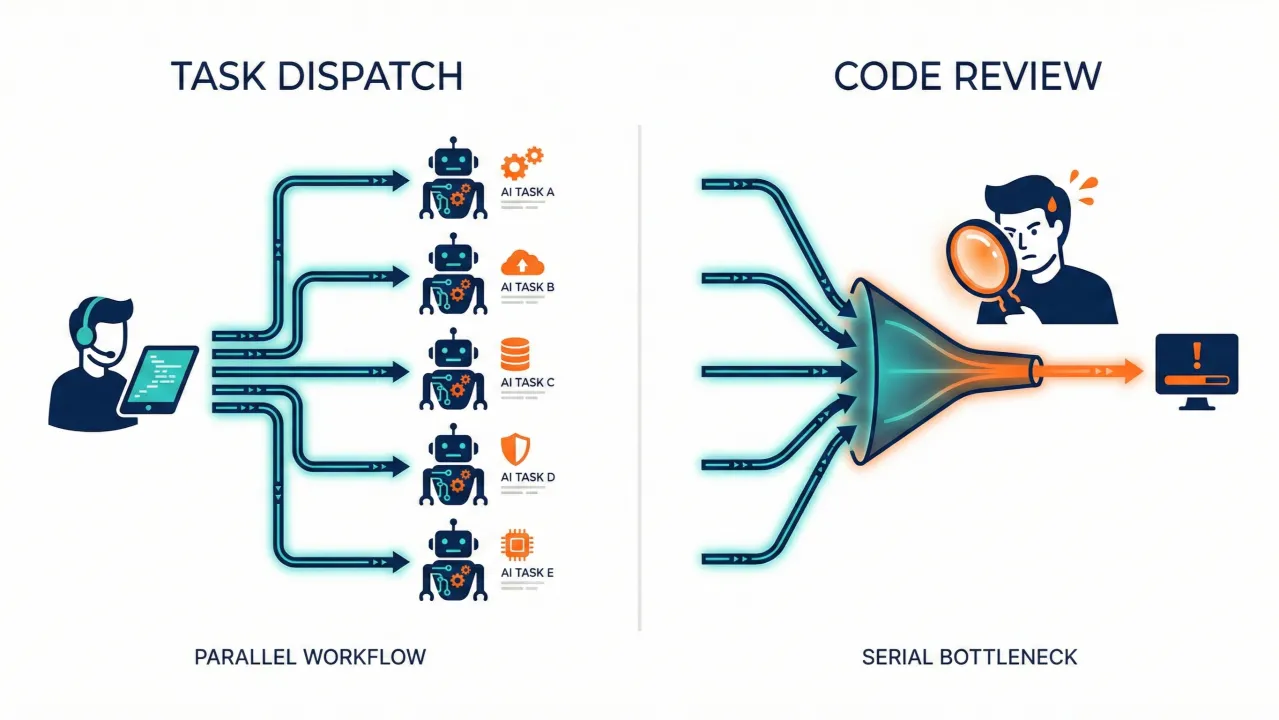

The central finding: managing AI agents creates a workflow that oscillates between two cognitively distinct modes—a shallow "dispatch" mode amenable to parallelization and a deep "review" mode that is strictly serial and bottleneck-bound. Success depends on recognizing this duality and building systems that externalize intent across parallel workstreams.

Part 1: The Nature of Managerial Work

Mintzberg's Foundational Insight

Henry Mintzberg's The Nature of Managerial Work (1973) fundamentally challenged assumptions about what managers actually do. Through direct observation, he found that managerial work is characterized by:

- Brevity: Time spent on any single issue was very short; Mintzberg reported that half of the observed chief executives' activities lasted less than nine minutes

- Variety: Managers handle many different types of issues in rapid succession

- Fragmentation: Work is constantly interrupted; sustained focus is rare

- Preference for verbal communication: Managers favor live interaction over written documents

- Reactive orientation: Responding to immediate demands rather than strategic contemplation

Tengblad's 2006 replication study, conducted 30 years later, found the basic pattern held—managers still worked at an unrelenting pace through brief, varied activities. The main differences were more emphasis on group interactions with subordinates and somewhat less extreme fragmentation.

Why This Matters for Cognitive Load

Mintzberg's description maps directly onto what cognitive scientists call "supervisory cognition"—a mode of processing that draws on verbal communication, social judgment, and rapid task-set reconfiguration. Critically, for most managerial interactions, the depth of novel problem-solving is relatively low. Asking "what's blocking you?" and responding "try X, loop in Y if that doesn't work" engages working memory briefly, makes a pattern-match to prior experience, and moves on.

In terms of the Problem State Bottleneck (Borst et al., 2010), most managerial interactions don't deeply engage the problem-state slot. This is why managers can handle many brief interactions without catastrophic performance degradation—each interaction is relatively self-contained and draws on practiced schemas rather than requiring novel problem construction.

Part 2: Span of Control and Employee Experience Level

The Core Finding

The span of control literature converges on a critical insight: the key variable isn't the raw number of direct reports—it's the cognitive demand per report. The factors that determine appropriate span of control include:

- Task complexity: Routine, standardized work allows wider spans; complex, judgment-intensive work requires narrower spans

- Employee experience level: Senior staff need less oversight; junior staff need more

- Manager role: "Producing managers" who do their own technical work can supervise fewer people than pure managers

Junior vs. Senior Staff: Fundamentally Different Cognitive Loads

Managing junior staff typically requires a narrow span of control (4-6 direct reports) because juniors demand:

- Frequent directive interactions (not just answering questions, but framing problems)

- Detailed review of output (more errors, missed edge cases, need for architectural guidance)

- Heavy context loading for each interaction (cannot assume they've considered relevant factors)

In cognitive terms: reviewing a junior's work engages the problem-state bottleneck heavily. The manager must load full context, mentally simulate execution, and check for issues at multiple levels.

Managing senior staff allows a much wider span (10+ reports) because seniors require:

- Occasional strategic check-ins (they self-direct between interactions)

- Light review of output (trust in patterns; checking for alignment, not correctness)

- Less context loading per interaction (shared mental models, shared vocabulary)

In cognitive terms: reviewing a senior's work is more like monitoring—scanning for misalignment with broader intent, which can be done with less deep engagement of the central bottleneck.
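One way to make the "cognitive demand per report" idea concrete is to treat supervision as a fixed weekly attention budget. The sketch below is a toy model, not something taken from the span of control literature: the function `max_span` and every hour figure are assumptions, chosen only so the results land in the ranges quoted above.

```python
import math


def max_span(supervisory_hours_per_week: float, hours_per_report: float) -> int:
    """Largest number of reports that fits in a weekly supervisory budget."""
    if hours_per_report <= 0:
        raise ValueError("hours_per_report must be positive")
    return math.floor(supervisory_hours_per_week / hours_per_report)


# Hypothetical figures, for illustration only.
BUDGET = 20.0         # hours per week a pure manager can spend supervising
JUNIOR_DEMAND = 4.0   # hours per week each junior report consumes
SENIOR_DEMAND = 1.5   # hours per week each senior report consumes

junior_span = max_span(BUDGET, JUNIOR_DEMAND)         # 5, inside the 4-6 range
senior_span = max_span(BUDGET, SENIOR_DEMAND)         # 13, i.e. "10+"
# A producing manager gives up part of the budget to their own technical work.
producing_span = max_span(BUDGET / 2, JUNIOR_DEMAND)  # 2
```

The point of the model is that the span falls out of the demand per report, not the other way around: halving the budget or doubling the review depth narrows the span by the same factor.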

Part 3: AI Coding Agents as an Unprecedented Hybrid

Neither Junior Nor Senior Developer

Current AI coding agents present a cognitive load profile unlike any human report. They combine characteristics of both junior and senior workers in ways that create new challenges.

Like ultra-productive juniors:

- Need clear, specific instructions to perform well

- Produce work that requires real review (cannot be trusted implicitly)

- Don't understand broader architectural context the way a senior colleague would

- May introduce subtle bugs or misalignments that require expert detection

Unlike any human report:

- No 1:1s, motivation, career development, or emotional support needed

- Don't get blocked by organizational politics

- Work continuously without breaks

- Dispatch cost is minimal (typing a prompt vs. conducting a standup)

- Can be parallelized without coordination overhead between agents

The Two-Phase Cognitive Profile

Managing AI agents breaks into two distinct phases with very different cognitive properties:

Phase 1: Task Specification and Dispatch

This is relatively shallow work. The developer translates intent into instructions, drawing on existing mental models of the codebase and problem domain. For experienced developers, this maps onto what Sarkar (2025) calls "planning-style instructions"—structured, goal-oriented prompts that leverage existing schemas.

Dispatch can be interleaved with other work because each dispatch is a brief verbal-output task that doesn't hold the problem-state slot for extended periods. Multiple agents can be dispatched in sequence without catastrophic interference. This phase resembles Mintzberg's managerial work: brief, varied, action-oriented.

Phase 2: Output Review and Integration

This is unambiguously deep work. Code review research consistently shows it's among the most cognitively demanding activities in software engineering. Baum (2019) and Bacchelli & Bird (2013) document that review requires loading someone else's mental model into working memory—their architectural choices, naming conventions, edge cases considered, and assumptions made.

One study found developers spend 58% of maintenance time simply understanding existing code before making changes. Review demands this same cognitive effort compressed into a shorter window.

This phase engages the central bottleneck fully. You cannot meaningfully review two pull requests simultaneously. The problem-state slot is occupied, and attempting to interleave review tasks will incur the full dual-task costs documented in the cognitive literature (20-40% performance degradation, increased errors, attention residue effects).
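The asymmetry between the two phases can be sketched as a simple throughput model: agent output scales with the number of agents, while review capacity is a single serial queue. This is a minimal illustration under stated assumptions. The function `merged_per_day` and the sample numbers are hypothetical, and the model ignores dispatch cost and rework.

```python
def merged_per_day(num_agents: int,
                   tasks_per_agent_per_day: float,
                   review_hours_per_day: float,
                   review_hours_per_task: float) -> float:
    """Tasks merged per day when production is parallel but review is serial."""
    produced = num_agents * tasks_per_agent_per_day              # scales with agents
    reviewable = review_hours_per_day / review_hours_per_task    # one reviewer, one queue
    return min(produced, reviewable)


# Hypothetical inputs: each agent finishes 3 tasks a day, the developer has
# 4 hours of deep-work review time, and each review takes 30 minutes.
throughput = {n: merged_per_day(n, 3.0, 4.0, 0.5) for n in (1, 2, 4, 8)}
# throughput == {1: 3.0, 2: 6.0, 4: 8.0, 8: 8.0}
```

Under these assumptions, adding agents beyond the third stops increasing merged work: the reviewer can clear 8 tasks a day, and everything produced past that waits in the queue.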

Part 4: Empirical Evidence from AI-Assisted Development

The Faros AI Study (2025)

Analysis of telemetry from 10,000+ developers across 1,255 teams found:

- Teams with high AI adoption completed 21% more tasks and merged 98% more pull requests

- However, PR review time increased 91%—the bottleneck shifted from production to review

- AI adoption was associated with a 9% increase in bugs per developer and 154% increase in average PR size

- Developers were interacting with 9% more tasks and 47% more pull requests per day

The study explicitly noted: "Historically, context switching has been viewed as a negative indicator, correlated with cognitive overload and reduced focus. AI is shifting that benchmark... developers are not just writing code—they are initiating, unblocking, and validating AI-generated contributions across multiple workstreams."

Sarkar's Higher-Order Thinking Study (2025)

Suproteem Sarkar's SSRN paper analyzing 323,589 code merges across 32 companies found:

- Software output increased 39% after agents became the default code generation mode

- More experienced workers accept agent-generated code 6% more often than junior colleagues

- Experienced workers give clearer, planning-style instructions, improving alignment with intent

- This positive experience gradient for agents contrasts with autocomplete, where juniors benefit more

The study concludes: "Agents may shift the production process from the syntactic activity of typing code to the semantic activity of instructing and evaluating agents... abstraction, clarity, and evaluation may be important skills for workers."

The 70% Problem (Osmani)

Addy Osmani's widely-cited observation captures the senior/junior divide:

- AI coding assistants can get you 70% of the way to a solution

- For seniors, the last 30% is where their expertise shines—they can efficiently evaluate and complete the work

- For juniors, the last 30% is often slower than writing it themselves because they can't reliably evaluate what they're looking at

This aligns with the cognitive science of expertise: seniors have built deep schemas through years of practice that enable faster pattern-matching during review. The bottleneck is still present, but they process through it more quickly (stage shortening). Juniors lack these schemas and cannot distinguish correct-looking code from subtly wrong code.

Part 5: The Producing Manager Problem

The Hardest Role in Management

The span of control literature identifies the "producing manager"—someone who splits time between managing others and doing their own technical work—as occupying the most cognitively demanding role. When managers must also do individual contributor work:

- Span of control should be narrower than pure managers

- They face constant switching between incompatible cognitive modes

- The fragmented, reactive supervisory mode conflicts with the deep, sustained problem-solving mode

Developers as Producing Managers of Agents

A developer managing coding agents is essentially a producing manager. They're attempting to:

- Do their own deep thinking: Architecture decisions, system design, understanding user intent

- Simultaneously supervise autonomous workers: Dispatching tasks, checking status, reviewing output, integrating results

The dual-tasking research predicts exactly what the empirical data shows: throughput increases, but quality pressure rises, and the bottleneck shifts from production to review and integration.

Simon Willison captured this dynamic in his Pragmatic Engineer article:

"I was pretty skeptical about this at first. AI-generated code needs to be reviewed, which means the natural bottleneck on all of this is how fast I can review the results... Despite my misgivings, over the past few weeks I've noticed myself quietly starting to embrace the parallel coding agent lifestyle. I can only focus on reviewing and landing one significant change at a time, but I'm finding an increasing number of tasks that can still be fired off in parallel without adding too much cognitive overhead to my primary work."

This maps perfectly onto the research: dispatch is shallow work, review is deep work, and you can layer shallow tasks around a deep task if they don't compete for the same bottleneck resources.

Part 6: Implications for Practice and Tool Design

What Works: Leveraging the Two-Phase Structure

For individual developers:

- Recognize that dispatch and review are cognitively distinct—don't try to interleave reviews

- Batch agent dispatches during natural breaks in deep work

- Reserve each deep work period for a single cognitively demanding review; dispatch new agent tasks just before it starts, so they run while you review the output of the previous dispatch

- Senior developers should embrace agent management; juniors should be cautious about over-reliance

For engineering managers:

- Expect review bottlenecks to intensify as agent adoption increases

- Consider dedicated "review specialist" roles or time allocations

- Recognize that agents shift the constraint from coding speed to evaluation speed

- Junior developers may need more support, not less, in an agent-heavy environment

For tool designers:

- The biggest cost isn't dispatching or reviewing individual outputs—it's maintaining coherent intent across parallel workstreams

- Developers running 4-8 agents simultaneously need to track not just what each is doing, but how pieces fit together and what the original user need was

- Systems that externalize intent—preserving the "why" behind each dispatch, tracking relationships between workstreams, providing context for efficient review—fill a critical gap

- Traditional project management tools aren't built for this; the "intent layer" is missing
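To illustrate what "externalizing intent" might look like in a tool, here is a minimal sketch of a record that travels with each dispatch. The `IntentRecord` schema, its field names, the `review_brief` helper, and the sample tasks are all hypothetical and do not describe any existing product.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class IntentRecord:
    """One dispatched agent task plus the 'why' behind it (hypothetical schema)."""
    task_id: str
    user_need: str                 # the original need this work serves
    instruction: str               # what the agent was asked to do
    depends_on: List[str] = field(default_factory=list)  # related workstreams
    status: str = "dispatched"     # "dispatched" or "ready_for_review"


def review_brief(record: IntentRecord, records: Dict[str, IntentRecord]) -> str:
    """Assemble the context a reviewer needs before opening the diff."""
    lines = [
        f"Task {record.task_id}: {record.instruction}",
        f"Why: {record.user_need}",
    ]
    for dep_id in record.depends_on:
        dep = records[dep_id]
        lines.append(f"Related: {dep.task_id} ({dep.status}): {dep.instruction}")
    return "\n".join(lines)


need = "Users should stay signed in across devices"
records = {
    "auth-1": IntentRecord("auth-1", need, "Add a refresh-token endpoint",
                           status="ready_for_review"),
    "auth-2": IntentRecord("auth-2", need, "Call the refresh endpoint from the client",
                           depends_on=["auth-1"]),
}
brief = review_brief(records["auth-2"], records)
```

The design choice worth noting is that the "why" is captured at dispatch time, when it is cheap, and replayed at review time, when reloading context is expensive.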

What Doesn't Work: Ignoring the Bottleneck

Common failure modes include:

- Assuming agents eliminate cognitive load: They shift it from production to review, often intensifying it

- Treating all agent interaction as equivalent: Dispatch is cheap; review is expensive

- Expecting juniors to benefit equally: The experience gradient runs opposite to autocomplete tools

- Ignoring review time in productivity calculations: The 91% increase in review time is a real cost that doesn't appear in "tasks completed" metrics
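The last failure mode can be shown with a small worked calculation. The sketch below applies the Faros percentages quoted earlier to a hypothetical baseline week. The baseline hours, the choice to treat the 91% figure as a rise in total review hours, and the assumption that production hours stay flat are all illustrative assumptions, not findings from the study.

```python
def tasks_per_hour(tasks: float, production_hours: float, review_hours: float) -> float:
    """Output per hour of total effort, counting review as well as production."""
    return tasks / (production_hours + review_hours)


# Hypothetical baseline week for one developer.
BASE_TASKS = 10.0
BASE_PRODUCTION_HOURS = 30.0
BASE_REVIEW_HOURS = 10.0

# Percent changes from the Faros figures, applied under the stated assumptions.
ai_tasks = BASE_TASKS * 1.21                 # 21% more tasks completed
ai_review_hours = BASE_REVIEW_HOURS * 1.91   # 91% more review time
ai_production_hours = BASE_PRODUCTION_HOURS  # assumed unchanged

naive_gain = ai_tasks / BASE_TASKS - 1       # what a "tasks completed" metric shows
baseline_rate = tasks_per_hour(BASE_TASKS, BASE_PRODUCTION_HOURS, BASE_REVIEW_HOURS)
ai_rate = tasks_per_hour(ai_tasks, ai_production_hours, ai_review_hours)
effective_gain = ai_rate / baseline_rate - 1  # gain per hour of total effort
```

With these inputs the task count rises by 21% while output per hour of total effort is roughly flat, slightly below the baseline. Different baselines give different results; the sketch only shows how a gain can disappear once review hours are counted.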

Part 7: Future Research Questions

- Training effects for agent management: Can developers be trained to review agent output more efficiently, similar to stage-shortening effects in dual-task training? Or does the novelty of each review task prevent automatization?

- Optimal agent parallelization: What is the empirically optimal number of concurrent agents given human review bottlenecks? How does this vary by developer experience?

- Intent preservation systems: What tool designs most effectively support intent maintenance across parallel agent workstreams? How should context be captured and presented to minimize review cognitive load?

- Junior developer development: If AI handles tasks that traditionally trained juniors, how do they develop the schemas needed for effective review? What new training approaches are needed?

- Age effects: Given that older adults show reduced capacity for bottleneck bypass in dual-task training, are there age-related differences in adapting to agent management workflows?

Key References

Cognitive Science: Dual-Task Performance and Bottlenecks

Borst, J. P., Taatgen, N. A., & Van Rijn, H. (2010). The problem state: A cognitive bottleneck in multitasking. Journal of Experimental Psychology: Learning, Memory, and Cognition, 36(2), 363-382.

Pashler, H. (1994). Dual-task interference in simple tasks: Data and theory. Psychological Bulletin, 116(2), 220-244.

Leroy, S. (2009). Why is it so hard to do my work? The challenge of attention residue when switching between work tasks. Organizational Behavior and Human Decision Processes, 109(2), 168-181.

Newport, C. (2016). Deep Work: Rules for Focused Success in a Distracted World. Grand Central Publishing.

Management Science: Span of Control and Managerial Work

Mintzberg, H. (1973). The Nature of Managerial Work. Harper & Row.

Mintzberg, H. (2009). Managing. Berrett-Koehler Publishers.

Tengblad, S. (2006). Is there a "new managerial work"? A comparison with Henry Mintzberg's classic study 30 years later. Journal of Management Studies, 43(7), 1437-1461.

Bandiera, O., Guiso, L., Prat, A., & Sadun, R. (2012). Span of control and span of attention. Harvard Business School Working Paper, 12-053.

Code Review and Developer Cognition

Bacchelli, A., & Bird, C. (2013). Expectations, outcomes, and challenges of modern code review. Proceedings of the International Conference on Software Engineering (ICSE).

Baum, T. (2019). The cognitive aspects of code review. Empirical Software Engineering.

Mayer, R. E., & Moreno, R. (2003). Nine ways to reduce cognitive load in multimedia learning. Educational Psychologist, 38(1), 43-52.

AI-Assisted Development: Empirical Studies

Sarkar, S. K. (2025). AI agents, productivity, and higher-order thinking: Early evidence from software development. SSRN Working Paper.

Faros AI. (2025). The AI productivity paradox research report. https://www.faros.ai/blog/ai-software-engineering

Willison, S. (2025). New trend: Programming by kicking off parallel AI agents. The Pragmatic Engineer.

Osmani, A. (2025). The 70% problem with AI coding assistants. The Pragmatic Engineer.

AI and Workforce Implications

IEEE Spectrum. (2025). AI shifts expectations for entry level jobs. December 2025.

arxiv. (2025). Coding with AI: From a reflection on industrial practices to future computer science and software engineering education. arXiv:2512.23982.

Pajo et al. (2025). Towards decoding developer cognition in the age of AI assistants. arXiv:2501.02684.

Analysis prepared February 2026. Synthesizes cognitive science research on dual-task performance with management literature and emerging empirical evidence on AI-assisted software development.
